Zehao Zhao

1$^{st}$ Place Solution of WWW 2025 EReL@MIR Workshop Multimodal CTR Prediction Challenge

May 06, 2025

Abstract: The WWW 2025 EReL@MIR Workshop Multimodal CTR Prediction Challenge focuses on applying multimodal embedding features to improve click-through rate (CTR) prediction in recommender systems. This technical report presents our 1$^{st}$ place solution for Task 2, which combines sequential modeling and feature interaction learning to capture user-item interactions. To integrate multimodal information, we simply append the frozen multimodal embeddings to each item embedding. Experiments on the challenge dataset demonstrate the effectiveness of our method, which achieves an AUC of 0.9839 on the leaderboard, much higher than the baseline model. Code and configuration are available in our GitHub repository, and the model checkpoint is available on HuggingFace.
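The integration step described in the abstract above, appending a frozen multimodal embedding to each item embedding, can be illustrated with a minimal sketch. This is not the authors' implementation: the function names, the table layout, and the toy values are all hypothetical, and plain Python lists stand in for the tensors a real model would use.

```python
from typing import Dict, List

Vector = List[float]


def append_frozen_embedding(
    item_id: int,
    id_emb: Dict[int, Vector],   # hypothetical trainable ID-embedding table
    mm_emb: Dict[int, Vector],   # hypothetical frozen multimodal table (never updated)
) -> Vector:
    """Concatenate an item's ID embedding with its frozen multimodal embedding."""
    # List concatenation: output length = len(ID vector) + len(multimodal vector).
    return id_emb[item_id] + mm_emb[item_id]


def encode_history(
    history: List[int],
    id_emb: Dict[int, Vector],
    mm_emb: Dict[int, Vector],
) -> List[Vector]:
    """Build the per-item inputs that a sequential model would consume."""
    return [append_frozen_embedding(i, id_emb, mm_emb) for i in history]


# Toy tables with made-up values: 2-d ID embeddings, 3-d multimodal embeddings.
id_emb = {1: [0.1, 0.2], 2: [0.3, 0.4]}
mm_emb = {1: [1.0, 0.0, 0.5], 2: [0.0, 1.0, 0.5]}

sequence = encode_history([2, 1], id_emb, mm_emb)
```

In this sketch each item in the user history becomes a 5-dimensional vector (2 + 3). Only the ID-embedding table would receive gradient updates during training, while the multimodal table stays fixed.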

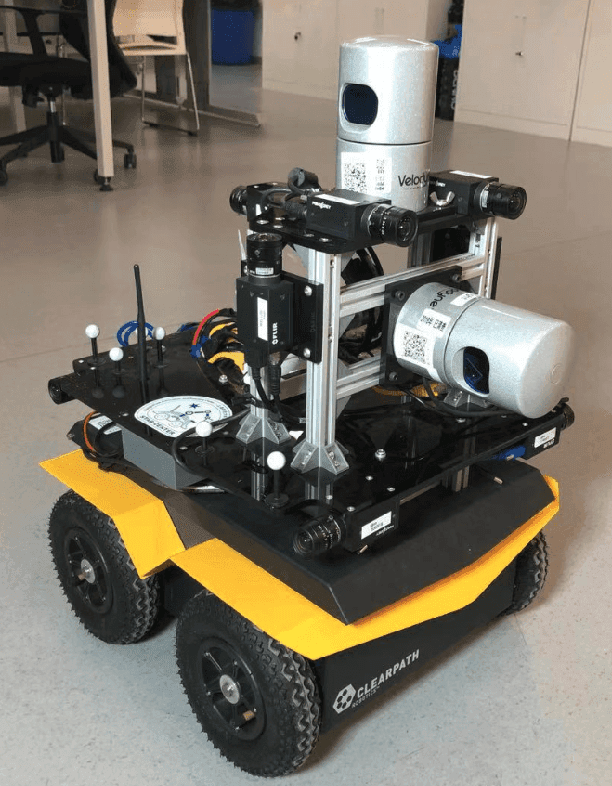

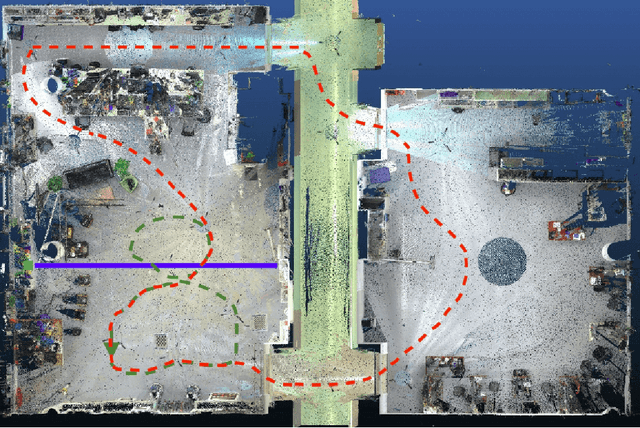

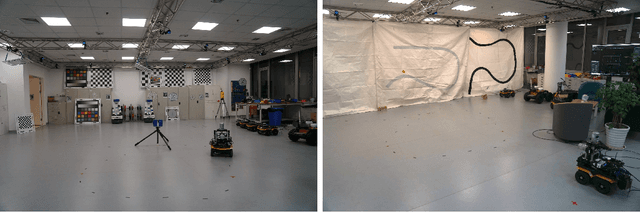

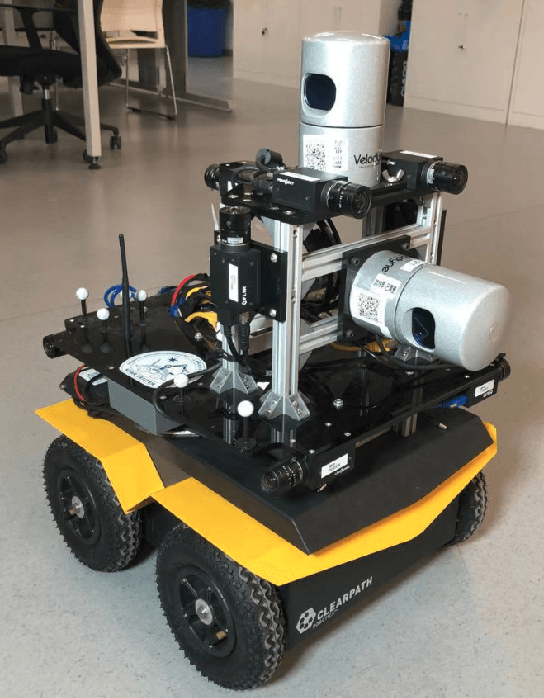

Advanced Mapping Robot and High-Resolution Dataset

Jul 23, 2020

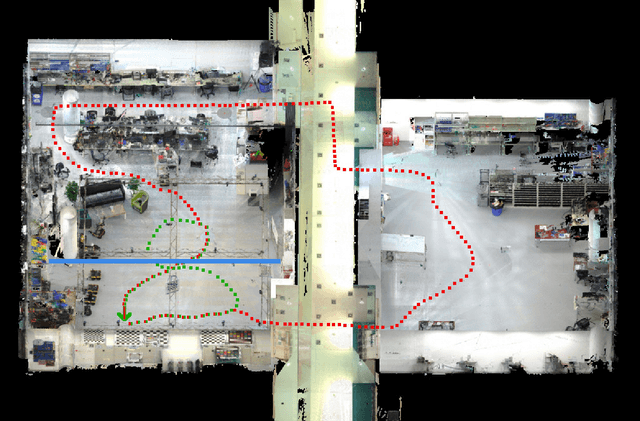

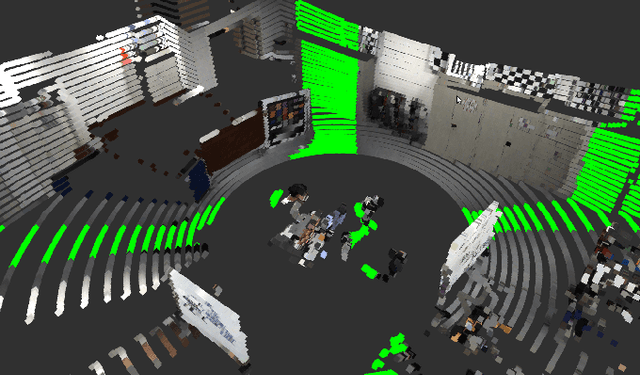

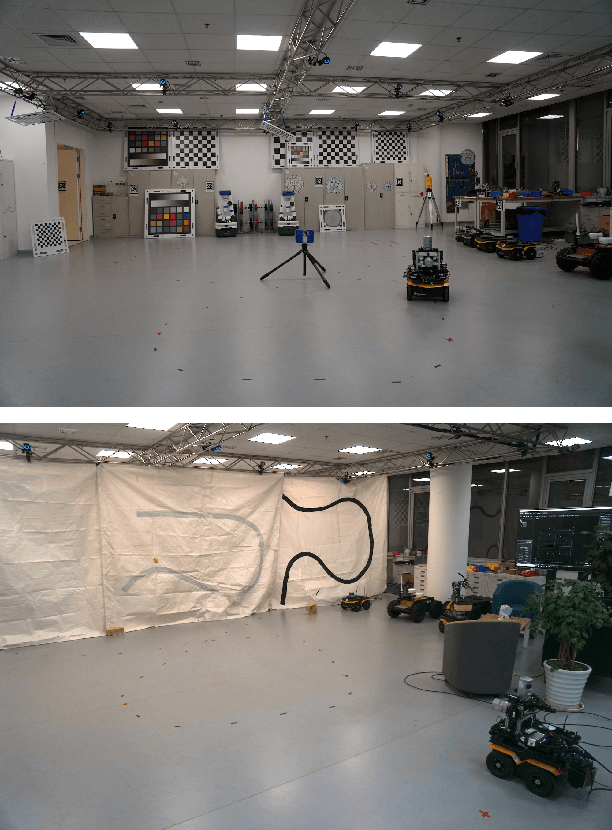

Abstract: This paper presents a fully hardware-synchronized mapping robot with support for a hardware-synchronized external tracking system, for highly precise timing and localization. Nine high-resolution cameras and two 32-beam 3D Lidars were used along with a professional static 3D scanner for ground truth map collection. With all sensors calibrated on the mapping robot, three datasets were collected to evaluate the performance of mapping algorithms within a room and between rooms. Based on these datasets we generate maps and trajectory data, which are then fed into evaluation algorithms. We provide the datasets for download, and the mapping and evaluation procedures are designed to be easily reproducible for maximum comparability. We have also conducted a survey of available robotics-related datasets and compiled a table of those datasets and a number of their properties.

Towards Generation and Evaluation of Comprehensive Mapping Robot Datasets

May 23, 2019

Abstract: This paper presents a fully hardware-synchronized mapping robot with support for a hardware-synchronized external tracking system, for highly precise timing and localization. We also employ a professional static 3D scanner for ground truth map collection. Three datasets are generated to evaluate the performance of mapping algorithms within a room and between rooms. Based on these datasets we generate maps and trajectory data, which are then fed into evaluation algorithms. The mapping and evaluation procedures are designed to be easily reproducible for maximum comparability. Finally, we draw a couple of conclusions about the tested SLAM algorithms.
